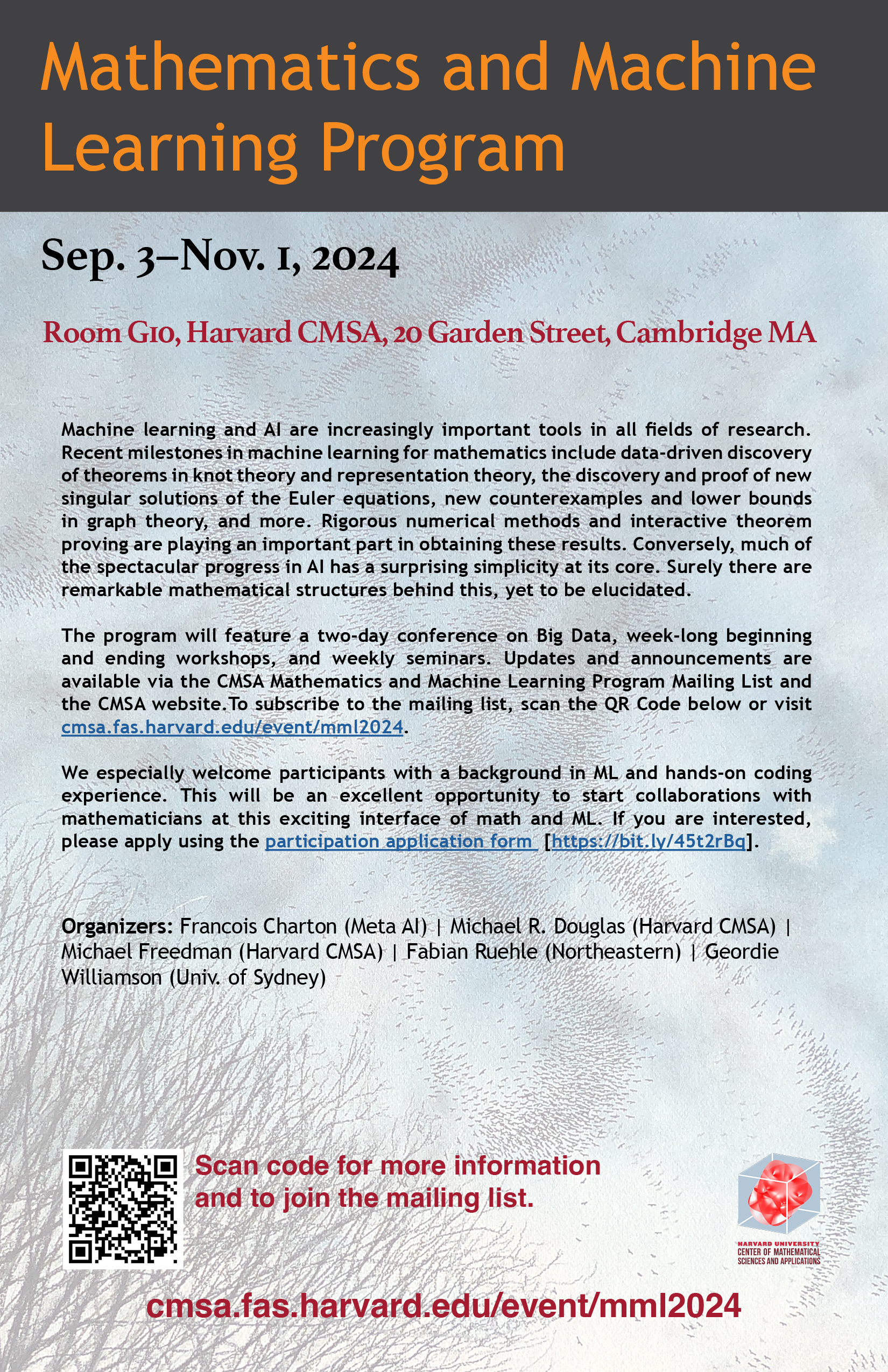

Mathematics and Machine Learning Program

Dates: September 3 – November 1, 2024

Location: Harvard CMSA, 20 Garden Street, Cambridge, MA 02138

Machine learning and AI are increasingly important tools in all fields of research. Recent milestones in machine learning for mathematics include data-driven discovery of theorems in knot theory and representation theory, the discovery and proof of new singular solutions of the Euler equations, new counterexamples and lower bounds in graph theory, and more. Rigorous numerical methods and interactive theorem proving are playing an important part in obtaining these results. Conversely, much of the spectacular progress in AI has a surprising simplicity at its core. Surely there are remarkable mathematical structures behind this, yet to be elucidated.

September 3–5, 2024: Opening Workshop: AI for Mathematicians, with Leon Bottou, François Charton, David McAllester, Adam Wagner and Geordie Williamson. A series of six lectures covering logic and theorem proving, AI methods, theory of machine learning, two lectures on case studies in math-AI, and a lecture and discussion on open problems and the ethics of AI in science.

Opening Workshop YouTube Playlist

September 6–7, 2024: Big Data Conference

September 9–13, 2024: Applying Machine Learning to Math, with François Charton and Geordie Williamson

Public Lecture September 12, 2024: Geordie Williamson, University of Sydney: Can AI help with hard mathematics? (YouTube link)

The focus of this week will be on practical examples and techniques for the mathematics researcher keen to explore or deepen their use of AI techniques. We will have talks showcasing easily stated problems on which machine learning techniques can be employed profitably. These provide excellent toy examples for generating intuition. We will also have expert talks on some of the technical subtleties that arise. There are several instances where the accepted heuristics emerging from the study of large language models (LLMs) and image recognition don’t appear to apply to mathematics problems, and we will try to highlight these subtleties.

Applying Machine Learning to Math YouTube Playlist

September 16–20, 2024: Number theory, with Drew Sutherland

The focus of this week will be on the use of ML as a tool for finding and understanding statistical patterns in number-theoretic datasets, using the recently discovered (and still largely unexplained) “murmurations” in the distribution of Frobenius traces in families of elliptic curves and other arithmetic L-functions as a motivating example.
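As a rough illustration of the kind of data involved, the sketch below computes the Frobenius trace a_p = p + 1 − #E(F_p) of an elliptic curve y² = x³ + ax + b by brute-force point counting, and averages it over a list of curves. The function names and the toy family of curves are hypothetical, chosen only for illustration. Actual murmuration studies average over large families of curves ordered by conductor and use far faster point-counting methods.

```python
def frobenius_trace(a: int, b: int, p: int) -> int:
    """Return a_p = p + 1 - #E(F_p) for E: y^2 = x^3 + a*x + b.

    Assumes p is an odd prime at which E has good reduction.
    Brute force, so only suitable for small p.
    """
    # square_counts[r] = number of y in F_p with y^2 = r (mod p)
    square_counts = [0] * p
    for y in range(p):
        square_counts[y * y % p] += 1

    # Each x contributes as many affine points as its right-hand side has square roots.
    affine_points = sum(
        square_counts[(x**3 + a * x + b) % p] for x in range(p)
    )
    # The extra 1 accounts for the point at infinity.
    return p + 1 - (affine_points + 1)


def average_trace(curves, p: int) -> float:
    """Average a_p over a list of (a, b) coefficient pairs (a toy family)."""
    return sum(frobenius_trace(a, b, p) for a, b in curves) / len(curves)
```

For example, `frobenius_trace(-1, 0, 5)` returns `-2` for the curve y² = x³ − x, which has 8 points over F_5 including the point at infinity.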

Number Theory YouTube Playlist

September 23–27, 2024: Knot theory, with Sergei Gukov

Knot theory is a great source of labeled data that can be synthetically generated. Moreover, many outstanding problems in knot theory and low-dimensional topology can be formulated as decision and classification tasks, e.g. “Is the knot 123_45 slice?” or “Can two given Kirby diagrams be related by a sequence of Kirby moves?” During this focus week we will explore various ways in which AI can be applied to problems in knot theory and how, based on these applications, mathematical reasoning can advance the development of AI algorithms. Another goal will be to develop formal knot theory libraries (e.g. contributions to mathlib) and to apply AI models to formal proof systems, in particular in the context of knot theory.

Knot Theory YouTube Playlist

September 30, 2024: Teaching and Machine Learning Panel Discussion, 3:30–5:30 pm ET

September 30–October 4, 2024: Graph theory and combinatorics, with Adam Wagner

This week, we will consider how machine learning can help us solve problems in combinatorics and graph theory, broadly interpreted, in practice. The advantage of these fields is that they deal with finite objects that are simple to set up using computers, and programs that work for one problem can often be adapted to work for several other related problems as well. Many times, the best constructions for a problem are easy to interpret, making it simpler to judge how well a particular algorithm is performing. On the other hand, there are lots of open conjectures that are simple to state, for which the best-known constructions are counterintuitive, making it perhaps more likely that machine learning methods can spot patterns that are difficult to understand otherwise.

Graph Theory and Combinatorics YouTube Playlist

October 7–11, 2024: More number theory, with Drew Sutherland

The focus of this week will be on the use of AI as a tool to search for and/or construct interesting or extremal examples in number theory and arithmetic geometry, using LLM-based genetic algorithms, generative adversarial networks, game-theoretic methods, and heuristic tree pruning as alternatives to conventional local search strategies.

More Number Theory YouTube Playlist

October 14–18, 2024: Interactive theorem proving

This week we will discuss the use of interactive theorem proving systems such as Lean, Coq and Isabelle in mathematical research, and AI systems which prove theorems and translate between informal and formal mathematics.

Interactive Theorem Proving YouTube Playlist

October 21–25, 2024: Numerical Partial Differential Equations (PDE), with Tristan Buckmaster and Javier Gomez-Serrano

The focus of this week will be on constructing solutions to partial differential equations and, more broadly, dynamical systems (finite and infinite dimensional). We will discuss several toy problems and comment on issues such as sampling strategies, optimization algorithms, ill-posedness, and convergence. We will also outline strategies for further developing machine-learning findings and turning them into mathematical theorems via computer-assisted approaches.

Numerical PDEs YouTube Playlist

October 28–Nov. 1, 2024: Closing Workshop. The closing workshop will provide a forum for discussing the most current research in these areas, including work in progress and recent results from program participants.

Math and Machine Learning Closing Workshop YouTube Playlist

September 3–Nov. 1: Graduate topics in deep learning theory (Boston College), taught by Eli Grigsby, held at the CMSA Tuesdays and Thursdays, 2:30–3:45 pm Eastern Time. Course website (link).

Graduate Topics in Deep Learning YouTube Playlist

Course description: This is a course on geometric aspects of deep learning theory. Broadly speaking, we’ll investigate the question: How might human-interpretable concepts be expressed in the geometry of their data encodings, and how does this geometry interact with the computational units and higher-level algebraic structures in various parameterized function classes, especially neural network classes? From Sep. 3 to Nov. 1, the course will be presented as part of the Math and Machine Learning program at the CMSA in Cambridge. During that portion, we will focus on the current state of research on mechanistic interpretability of transformers, the architecture underlying large language models like ChatGPT.

Program Organizers

- François Charton (Meta AI)

- Michael R. Douglas (Harvard CMSA)

- Michael Freedman (Harvard CMSA)

- Fabian Ruehle (Northeastern)

- Geordie Williamson (Univ. of Sydney)

Program Schedule

| Day | Time | Event | Location |
| --- | --- | --- | --- |
| Monday | 10:30 am–12:00 pm | Open Discussion | Room G10 |
| Monday | 12:00–1:30 pm | Group lunch | CMSA Common Room |
| Tuesday | 2:30–3:45 pm | Topics in deep learning theory | Room G10 |
| Tuesday | 4:00–5:00 pm | Open Discussion/Tea | CMSA Common Room |
| Wednesday | 10:30 am–12:00 pm | Open Discussion | Room G10 |
| Wednesday | 2:00–3:00 pm | | Room G10 |
| Thursday | 2:30–3:45 pm | Topics in deep learning theory | Room G10 |
| Friday | 10:30 am–12:00 pm | Open Discussion | Room G10 |

Harvard CMSA thanks Mistral AI for a generous donation of computing credit.
