
GRAMSIA: Graphical Models, Statistical Inference, and Algorithms

May 16, 2023 @ 9:00 am - May 19, 2023 @ 5:00 pm

On May 16 – May 19, 2023 the CMSA hosted a four-day workshop on GRAMSIA: Graphical Models, Statistical Inference, and Algorithms. The workshop was held in room G10 of the CMSA, located at 20 Garden Street, Cambridge, MA. This workshop was organized by David Gamarnik (MIT), Kavita Ramanan (Brown), and Prasad Tetali (Carnegie Mellon).

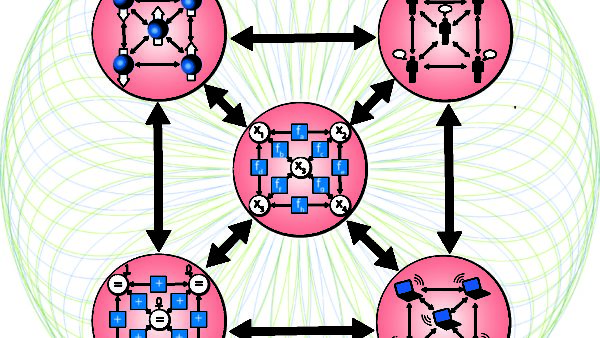

The purpose of this workshop was to highlight various mathematical questions and issues associated with graphical models and message-passing algorithms, and to bring together a group of researchers for discussion of the latest progress and challenges ahead. In addition to the substantial impact of graphical models on applied areas, they are also connected to various branches of the mathematical sciences. Rather than focusing on the applications, the primary goal was to highlight and deepen these mathematical connections.

Location: Room G10, CMSA, 20 Garden Street, Cambridge MA 02138

Speakers:

- Jake Abernethy (Georgia Tech)

- Guy Bresler (MIT)

- Florent Krzakala (Ecole Polytechnique Federale de Lausanne)

- Lester Mackey (Microsoft Research New England)

- Theo McKenzie (Harvard)

- Andrea Montanari (Stanford)

- Elchanan Mossel (MIT)

- Yury Polyanskiy (MIT)

- Patrick Rebeschini (Oxford)

- Subhabrata Sen (Harvard)

- Devavrat Shah (MIT)

- Pragya Sur (Harvard)

- Alex Wein (UC Davis)

- Yihong Wu (Yale)

- Sarath Yasodharan (Brown)

- Horng-Tzer Yau (Harvard)

- Christina Lee Yu (Cornell)

- Ilias Zadik (MIT)

Schedule:

Tuesday, May 16, 2023

| 9:00 am | Breakfast |

| 9:15 – 9:30 am | Introductory remarks by organizers |

| 9:30 – 10:20 am | Subhabrata Sen (Harvard)

Title: Mean-field approximations for high-dimensional Bayesian regression Abstract: Variational approximations provide an attractive computational alternative to MCMC-based strategies for approximating the posterior distribution in Bayesian inference. The Naive Mean-Field (NMF) approximation is the simplest version of this strategy—this approach approximates the posterior in KL divergence by a product distribution. There has been considerable progress recently in understanding the accuracy of NMF under structural constraints such as sparsity, but not much is known in the absence of such constraints. Moreover, in some high-dimensional settings, the NMF is expected to be grossly inaccurate, and advanced mean-field techniques (e.g. Bethe approximation) are expected to provide accurate approximations. We will present some recent work in understanding this duality in the context of high-dimensional regression. This is based on joint work with Sumit Mukherjee (Columbia) and Jiaze Qiu (Harvard University). |

| 10:30 – 11:00 am | Coffee break |

| 11:00 – 11:50 am | Elchanan Mossel (MIT)

Title: Some modern perspectives on the Kesten-Stigum bound for reconstruction on trees. Abstract: The Kesten-Stigum bound is a fundamental spectral bound for reconstruction on trees. I will discuss some conjectures and recent progress on understanding when it is tight as well as some conjectures and recent progress on what it signifies even in cases where it is not tight. |

| 12:00 – 2:00 pm | Lunch |

| 2:00 – 2:50 pm | Christina Lee Yu (Cornell)

Title: Exploiting Neighborhood Interference with Low Order Interactions under Unit Randomized Design Abstract: Network interference, where the outcome of an individual is affected by the treatment assignment of those in their social network, is pervasive in many real-world settings. However, it poses a challenge to estimating causal effects. We consider the task of estimating the total treatment effect (TTE), or the difference between the average outcomes of the population when everyone is treated versus when no one is, under network interference. Under a Bernoulli randomized design, we utilize knowledge of the network structure to provide an unbiased estimator for the TTE when network interference effects are constrained to low order interactions among neighbors of an individual. We make no assumptions on the graph other than bounded degree, allowing for well-connected networks that may not be easily clustered. Central to our contribution is a new framework for balancing between model flexibility and statistical complexity as captured by this low order interactions structure. |

| 3:00 – 3:30 pm | Coffee break |

| 3:30 – 4:20 pm | Theo McKenzie (Harvard)

Title: Spectral statistics for sparse random graphs Abstract: Understanding the eigenvectors and eigenvalues of the adjacency matrix of random graphs is fundamental to many algorithmic questions; moreover, it is related to longstanding questions in quantum physics. In this talk we focus on random models of sparse graphs, giving some properties that are unique to these sparse graphs, as well as some specific obstacles. Based on this, we show some new results on spectral statistics of sparse random graphs, as well as some conjectures. |

| 4:40 – 6:30 pm | Lightning talk session + welcome reception |

Wednesday, May 17, 2023

| 9:00 am | Breakfast |

| 9:30 – 10:20 am | Ilias Zadik (MIT)

Title: Revisiting Jerrum’s Metropolis Process for the Planted Clique Problem Abstract: Jerrum in 1992 (co-)introduced the planted clique model by proving the (worst-case initialization) failure of the Metropolis process to recover any o(sqrt(n))-sized clique planted in the Erdos-Renyi graph G(n,1/2). This result is classically cited in the literature on the problem as the “first evidence” that the o(sqrt(n))-sized planted clique recovery task is “algorithmically hard”. |

| 10:30 – 11:00 am | Coffee break |

| 11:00 – 11:50 am | Florent Krzakala (Ecole Polytechnique Federale de Lausanne)

Title: Are Gaussian data all you need for machine learning theory? Abstract: Clearly, no! Nevertheless, the Gaussian assumption remains prevalent among theoreticians, particularly in high-dimensional statistics and physics, less so in traditional statistical learning circles. To what extent are Gaussian features merely a convenient choice for certain theoreticians, or genuinely an effective model for learning? In this talk, I will review recent progress on these questions, achieved using rigorous probabilistic approaches in high-dimension and techniques from mathematical statistical physics. I will demonstrate that, despite its apparent limitations, the Gaussian approach is sometimes much closer to reality than one might expect. In particular, I will discuss key findings from a series of recent papers that showcase the Gaussian equivalence of generative models, the universality of Gaussian mixtures, and the conditions under which a single Gaussian can characterize the error in high-dimensional estimation. These results illuminate the strengths and weaknesses of the Gaussian assumption, shedding light on its applicability and limitations in the realm of theoretical statistical learning. |

| 12:00 – 2:00 pm | Lunch |

| 2:00 – 2:50 pm | Andrea Montanari (Stanford)

Title: Dimension free ridge regression Abstract: Random matrix theory has become a widely useful tool in high-dimensional statistics and theoretical machine learning. However, random matrix theory is largely focused on the proportional asymptotics in which the number of columns grows proportionally to the number of rows of the data matrix. This is not always the most natural setting in statistics where columns correspond to covariates and rows to samples. With the objective to move beyond the proportional asymptotics, we revisit ridge regression. We allow the feature vector to be high-dimensional, or even infinite-dimensional, in which case it belongs to a separable Hilbert space, and assume it to satisfy a certain convex concentration property. Within this setting, we establish non-asymptotic bounds that approximate the bias and variance of ridge regression in terms of the bias and variance of an ‘equivalent’ sequence model (a regression model with diagonal design matrix). Previously, such an approximation result was known only in the proportional regime and only up to additive errors: in particular, it did not allow one to characterize the behavior of the excess risk when it converges to 0. Our general theory recovers earlier results in the proportional regime (with better error rates). As a new application, we obtain a completely explicit and sharp characterization of ridge regression for Hilbert covariates with regularly varying spectrum. Finally, we analyze the overparametrized near-interpolation setting and obtain sharp ‘benign overfitting’ guarantees. [Based on joint work with Chen Cheng] |

| 3:00 – 3:50 pm | Yury Polyanskiy (MIT)

Title: Recent results on broadcasting on trees and stochastic block model Abstract: I will survey recent results and open questions regarding the q-ary stochastic block model and its local version (broadcasting on trees, or BOT). For example, establishing uniqueness of the non-trivial solution to distribution recursions (BP fixed point) implies a characterization for the limiting mutual information between the graph and community labels. For q=2 uniqueness holds in all regimes. For q>2 uniqueness is currently only proved above a certain threshold that asymptotically (for large q) is close to the Kesten-Stigum (KS) threshold. At the same time, between the BOT reconstruction threshold and KS we show that uniqueness does not hold, at least in the presence of (arbitrarily small) vertex-level side information. I will also discuss an extension of the robust reconstruction result of Janson-Mossel’2004. Based on joint works with Qian Yu (Princeton) and Yuzhou Gu (MIT). |

| 4:00 – 4:30 pm | Coffee break |

| 4:30 – 5:20 pm | Alex Wein (UC Davis)

Title: Is Planted Coloring Easier than Planted Clique? Abstract: The task of finding a planted clique in the random graph G(n,1/2) is perhaps the canonical example of a statistical-computational gap: for some clique sizes, the task is statistically possible but believed to be computationally hard. Really, there are multiple well-studied tasks related to the planted clique model: detection, recovery, and refutation. While these are equally difficult in the case of planted clique, this need not be true in general. In the related planted coloring model, I will discuss the computational complexity of these three tasks and the interplay among them. Our computational hardness results are based on the low-degree polynomial model of computation. By taking the complement of the graph, the planted coloring model is analogous to the planted clique model but with many planted cliques. Here our conclusion is that adding more cliques makes the detection problem easier but not the recovery problem. |

Thursday, May 18, 2023

| 9:00 am | Breakfast |

| 9:30 – 10:20 am | Guy Bresler (MIT)

Title: Algorithmic Decorrelation and Planted Clique in Dependent Random Graphs Abstract: There is a growing collection of average-case reductions starting from Planted Clique (or Planted Dense Subgraph) and mapping to a variety of statistics problems, sharply characterizing their computational phase transitions. These reductions transform an instance of Planted Clique, a highly structured problem with its simple clique signal and independent noise, to problems with richer structure. In this talk we aim to make progress in the other direction: to what extent can these problems, which often have complicated dependent noise, be transformed back to Planted Clique? Such a bidirectional reduction between Planted Clique and another problem shows a strong computational equivalence between the two problems. We develop a new general framework for reasoning about the validity of average-case reductions based on low sensitivity to perturbations. As a concrete instance of our general result, we consider the planted clique (or dense subgraph) problem in an ambient graph that has dependent edges induced by randomly adding triangles to the Erdos-Renyi graph G(n,p), and show how to successfully eliminate dependence by carefully removing the triangles while approximately preserving the clique (or dense subgraph). Joint work with Chenghao Guo and Yury Polyanskiy. |

| 10:30 – 11:00 am | Coffee break |

| 11:00 – 11:50 am | Sarath Yasodharan (Brown)

Title: A Sanov-type theorem for unimodular marked random graphs and its applications Abstract: We prove a Sanov-type large deviation principle for the component empirical measures of certain sequences of unimodular random graphs (including Erdos-Renyi and random regular graphs) whose vertices are marked with i.i.d. random variables. Specifically, we show that the rate function can be expressed in a fairly tractable form involving suitable relative entropy functionals. As a corollary, we establish a variational formula for the annealed pressure (or limiting log partition function) for various statistical physics models on sparse random graphs. This is joint work with I-Hsun Chen and Kavita Ramanan. |

| 12:00 – 12:15 pm | Group Photo |

| 12:15 – 2:00 pm | Lunch |

| 2:00 – 2:50 pm | Patrick Rebeschini (Oxford)

Title: Implicit regularization via uniform convergence Abstract: Uniform convergence is one of the main tools to analyze the complexity of learning algorithms based on explicit regularization, but it has shown limited applicability in the context of implicit regularization. In this talk, we investigate the statistical guarantees on the excess risk achieved by early-stopped mirror descent run on the unregularized empirical risk with the squared loss for linear models and kernel methods. We establish a direct link between the potential-based analysis of mirror descent from optimization theory and uniform learning. This link allows characterizing the statistical performance of the path traced by mirror descent directly in terms of localized Rademacher complexities of function classes depending on the choice of the mirror map, initialization point, step size, and the number of iterations. We will discuss other results along the way. |

| 3:00 – 3:50 pm | Pragya Sur (Harvard)

Title: A New Central Limit Theorem for the Augmented IPW estimator in high dimensions Abstract: Estimating the average treatment effect (ATE) is a central problem in causal inference. Modern advances in the field studied estimation and inference for the ATE in high dimensions through a variety of approaches. Doubly robust estimators such as the augmented inverse probability weighting (AIPW) form a popular approach in this context. However, the high-dimensional literature surrounding these estimators relies on sparsity conditions, either on the outcome regression (OR) or the propensity score (PS) model. This talk will introduce a new central limit theorem for the classical AIPW estimator that is agnostic to such sparsity-type assumptions. Specifically, we will study properties of the cross-fit version of the estimator under well-specified OR and PS models, and the proportional asymptotics regime where the number of confounders and sample size diverge proportionally to each other. Under assumptions on the covariate distribution, our CLT will uncover two crucial phenomena among others: (i) the cross-fit AIPW exhibits a substantial variance inflation that can be quantified in terms of the signal-to-noise ratio and other problem parameters, (ii) the asymptotic covariance between the estimators used while cross-fitting is non-negligible even on the root-n scale. These findings are strikingly different from their classical counterparts, and open a vista of possibilities for studying other similar high-dimensional effects. On the technical front, our work utilizes a novel interplay between three distinct tools: approximate message passing theory, the theory of deterministic equivalents, and the leave-one-out approach. |

| 4:00 – 4:30 pm | Coffee break |

| 4:30 – 5:20 pm | Yihong Wu (Yale)

Title: Random graph matching at Otter’s threshold via counting chandeliers |

Friday, May 19, 2023

| 9:00 am | Breakfast |

| 9:30 – 10:20 am | Jake Abernethy (Georgia Tech)

Title: Optimization, Learning, and Margin-maximization via Playing Games Abstract: A very popular trick for solving certain types of optimization problems is this: write your objective as the solution of a two-player zero-sum game, endow both players with an appropriate learning algorithm, watch how the opponents compete, and extract an (approximate) solution from the actions/decisions taken by the players throughout the process. This approach is very generic and provides a natural template to produce new and interesting algorithms. I will describe this framework and show how it applies in several scenarios, including optimization, learning, and margin-maximization problems. Along the way we will encounter a number of novel tools and rediscover some classical ones as well. |

| 10:30 – 11:00 am | Coffee break |

| 11:00 – 11:50 am | Devavrat Shah (MIT)

Title: On counterfactual inference with unobserved confounding via exponential family Abstract: We are interested in the problem of unit-level counterfactual inference with unobserved confounders owing to the increasing importance of personalized decision-making in many domains: consider a recommender system interacting with a user over time where each user is provided recommendations based on observed demographics, prior engagement levels as well as certain unobserved factors. The system adapts its recommendations sequentially and differently for each user. Ideally, at each point in time, the system wants to infer each user’s unknown engagement if it were exposed to a different sequence of recommendations while everything else remained unchanged. This task is challenging since: (a) the unobserved factors could give rise to spurious associations, (b) the users could be heterogeneous, and (c) only a single trajectory per user is available. We model the underlying joint distribution through an exponential family. This reduces the task of unit-level counterfactual inference to simultaneously learning a collection of distributions of a given exponential family with different unknown parameters, with a single observation per distribution. We discuss a computationally efficient method for learning all of these parameters with estimation error scaling linearly with the metric entropy of the space of unknown parameters: if the parameters are an s-sparse linear combination of k known vectors in p dimensions, the error scales as O(s log k/p). En route, we derive sufficient conditions for compactly supported distributions to satisfy the logarithmic Sobolev inequality. Based on joint work with Raaz Dwivedi, Abhin Shah and Greg Wornell (all at MIT), with manuscript available here: https://arxiv.org/abs/2211.08209 |

| 12:00 – 2:00 pm | Lunch |

| 2:00 – 2:50 pm | Lester Mackey (Microsoft Research New England)

Title: Advances in Distribution Compression Abstract: This talk will introduce two new tools for summarizing a probability distribution more effectively than independent sampling or standard Markov chain Monte Carlo thinning: |

| 3:00 – 3:30 pm | Coffee break |

| 3:30 – 4:20 pm | Horng-Tzer Yau (Harvard)

Title: On the spectral gap of mean-field spin glass models. Abstract: We will discuss recent progress regarding spectral gaps for the Glauber dynamics of spin glasses at high temperature. In addition, we will also report on estimating the operator norm of the covariance matrix for the SK model. |

Moderators: Benjamin McKenna, Harvard CMSA & Changji Xu, Harvard CMSA