
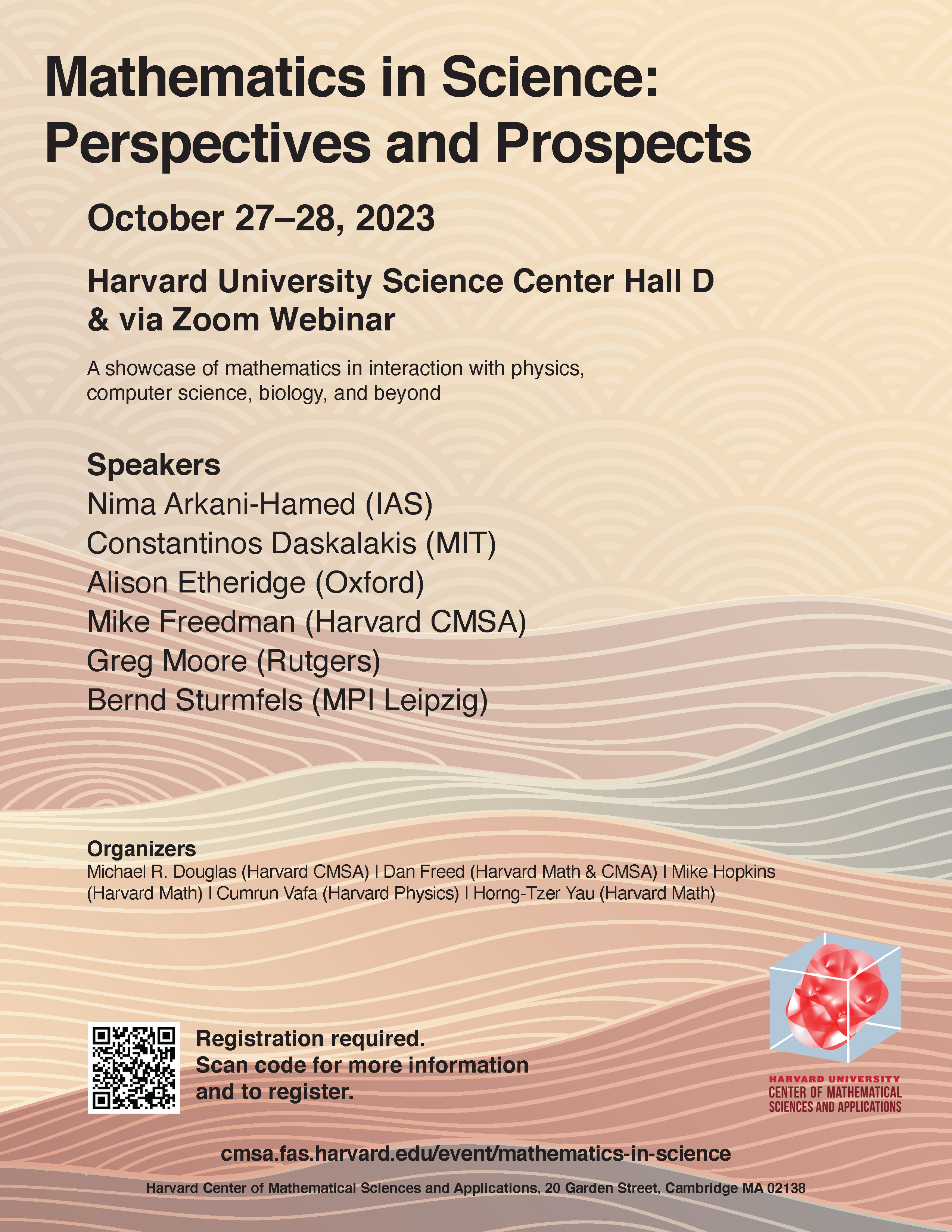

Mathematics in Science: Perspectives and Prospects

October 27, 2023 - October 28, 2023

A showcase of mathematics in interaction with physics, computer science, biology, and beyond.


Location: Harvard University Science Center, Hall D, and via Zoom.

Directions and Recommended Lodging

Mathematics in Science: Perspectives and Prospects YouTube Playlist

Speakers

- Nima Arkani-Hamed (IAS)

- Constantinos Daskalakis (MIT)

- Alison Etheridge (Oxford)

- Mike Freedman (Harvard CMSA)

- Greg Moore (Rutgers)

- Bernd Sturmfels (MPI Leipzig)

Organizers

- Michael R. Douglas (Harvard CMSA)

- Dan Freed (Harvard Math & CMSA)

- Mike Hopkins (Harvard Math)

- Cumrun Vafa (Harvard Physics)

- Horng-Tzer Yau (Harvard Math)

Schedule

Friday, October 27, 2023

| 2:00–3:15 pm | Greg Moore (Rutgers) |

Title: Remarks on Physical Mathematics

Abstract: I will describe some examples of the vigorous modern dialogue between mathematics and theoretical physics (especially high energy and condensed matter physics). I will begin by recalling Stokes’ phenomenon and explain how it is related to some notable developments in quantum field theory from the past 30 years. Time permitting, I might also say something about the dialogue between mathematicians working on the differential topology of four-manifolds and physicists working on supersymmetric quantum field theories. But I haven’t finished writing the talk yet, so I don’t know how it will end any more than you do.

| 3:15–3:45 pm | Break |

| 3:45–5:00 pm | Bernd Sturmfels (MPI Leipzig) |

Title: Algebraic Varieties in Quantum Chemistry

Abstract: We discuss the algebraic geometry behind the coupled cluster (CC) theory of quantum many-body systems. The high-dimensional eigenvalue problems that encode the electronic Schrödinger equation are approximated by a hierarchy of polynomial systems at various levels of truncation. The exponential parametrization of the eigenstates gives rise to truncation varieties. These generalize Grassmannians in their Plücker embedding. We explain how to derive Hamiltonians, offer a detailed study of truncation varieties and their CC degrees, and present the state of the art in solving the CC equations. This is joint work with Fabian Faulstich and Svala Sverrisdóttir.

Saturday, October 28, 2023

| 9:00 am | Breakfast |

| 9:30–10:45 am | Mike Freedman (Harvard CMSA) |

Title: ML, QML, and Dynamics: What mathematics can help us understand and advance machine learning?

Abstract: Vanilla deep neural nets (DNNs) repeatedly stretch and fold. They are reminiscent of the logistic map and the Smale horseshoe. What kind of dynamics is responsible for their expressivity and trainability? Is chaos playing a role? Is the Kolmogorov–Arnold representation theorem relevant? Large language models are full of linear maps. Might we look for emergent tensor structures in these highly trained maps, in analogy with the emergent tensor structures at local minima of certain loss functions in high-energy physics?

| 10:45–11:15 am | Break |

| 11:15 am–12:30 pm (via Zoom) | Nima Arkani-Hamed (IAS) |

Title: All-Loop Scattering as a Counting Problem

Abstract: I will describe a new understanding of scattering amplitudes based on fundamentally combinatorial ideas in the kinematic space of the scattering data. I will first discuss a toy model, the simplest theory of colored scalar particles with cubic interactions, at all loop orders and to all orders in the topological ’t Hooft expansion. I will present a novel formula for loop-integrated amplitudes, with no trace of the conventional sum over Feynman diagrams, but instead determined by a beautifully simple counting problem attached to any order of the topological expansion. A surprisingly simple shift of kinematic variables converts this apparent toy model into the realistic physics of pions and Yang-Mills theory. These results represent a significant step forward in the decade-long quest to formulate the fundamental physics of the real world in a new language, where the rules of spacetime and quantum mechanics, as reflected in the principles of locality and unitarity, are seen to emerge from deeper mathematical structures.

| 12:30–2:00 pm | Lunch break |

| 2:00–3:15 pm | Constantinos Daskalakis (MIT) |

Title: How to train deep neural nets to think strategically

Abstract: Many outstanding challenges in Deep Learning lie at its interface with Game Theory: from playing difficult games like Go to robustifying classifiers against adversarial attacks, training deep generative models, and training DNN-based models to interact with each other and with humans. In these applications, the utilities that the agents aim to optimize are non-concave in the parameters of the underlying DNNs; as a result, Nash equilibria fail to exist, and standard equilibrium analysis is inapplicable. So how can one train DNNs to be strategic? What is even the goal of the training? We shed light on these challenges through a combination of learning-theoretic, complexity-theoretic, game-theoretic, and topological techniques, presenting obstacles and opportunities for Deep Learning and Game Theory going forward.

| 3:15–3:45 pm | Break |

| 3:45–5:00 pm | Alison Etheridge (Oxford) |

Title: Modelling hybrid zones

Abstract: Mathematical models play a fundamental role in theoretical population genetics and, in turn, population genetics provides a wealth of mathematical challenges. In this lecture we investigate the interplay between a particular (ubiquitous) form of natural selection, spatial structure, and, if time permits, so-called genetic drift. A simple mathematical caricature will uncover the importance of the shape of the domain inhabited by a species for the effectiveness of natural selection.

Limited funding to help defray travel expenses is available for graduate students and recent PhDs. If you are a graduate student or postdoc and would like to apply for support, please register above and send an email to mathsci2023@cmsa.fas.

Please include your name, address, current status, university affiliation, citizenship, and area of study. F-1 visa holders are eligible to apply for support. If you are a graduate student, please send a brief letter of recommendation from a faculty member explaining the relevance of the conference to your studies or research. If you are a postdoc, please include a copy of your CV.